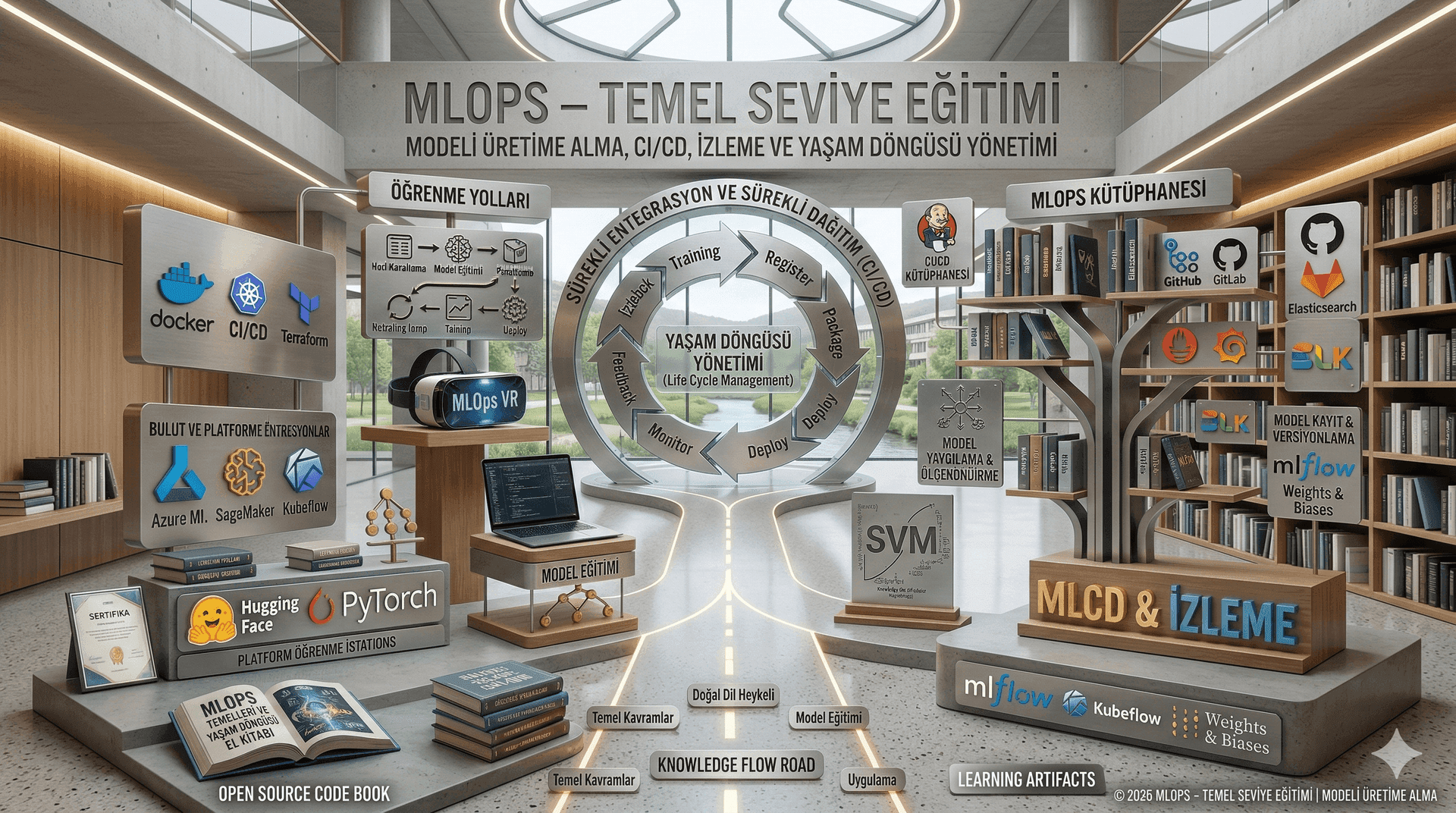

MLOps Fundamentals Training | Productionization, CI/CD, Monitoring & Model Lifecycle Management

This course covers the MLOps fundamentals needed to productionize ML models: versioning, CI/CD, Docker/Kubernetes deployment, monitoring and logging, and lifecycle management.

About This Course

Training Methodology

End-to-end lifecycle focus: development → deployment → monitoring → improvement, treated as a single framework

Principles + tools: MLflow/DVC, Docker, Kubernetes, and Prometheus/Grafana, each taught with the reasoning for why and where to use it

Pipeline mindset: reproducibility, traceability, rollback, and validation gates

Production realism: latency, resource usage, incident handling, anomaly detection

Governance awareness: security, ethics, compliance, adversarial risks in production

Hands-on demo & cases: a simple reference pipeline to ground learning
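
As a small illustration of the "validation gates" idea in the list above, the sketch below shows one way a pipeline step might decide whether a candidate model can be promoted. It is not taken from the course material: the metric names (`accuracy`, `latency_ms`), the thresholds, and the `validation_gate` helper are all hypothetical and would be chosen per project.

```python
from dataclasses import dataclass, field


@dataclass
class GateResult:
    passed: bool
    reasons: list = field(default_factory=list)


def validation_gate(candidate, baseline,
                    min_accuracy=0.80,
                    max_latency_ms=100.0,
                    max_regression=0.01):
    """Return a GateResult saying whether `candidate` may replace `baseline`.

    Both arguments are plain dicts with hypothetical keys
    "accuracy" and "latency_ms"; the thresholds are illustrative only.
    """
    reasons = []
    if candidate["accuracy"] < min_accuracy:
        reasons.append("accuracy below minimum")
    if candidate["latency_ms"] > max_latency_ms:
        reasons.append("latency above budget")
    if baseline["accuracy"] - candidate["accuracy"] > max_regression:
        reasons.append("regression versus baseline")
    # The gate passes only when no rule was violated.
    return GateResult(passed=not reasons, reasons=reasons)


if __name__ == "__main__":
    baseline = {"accuracy": 0.84, "latency_ms": 45.0}
    candidate = {"accuracy": 0.85, "latency_ms": 40.0}
    print(validation_gate(candidate, baseline))
```

In a CI/CD setting, a failing gate would typically stop the release step and leave the currently deployed version in place, which is what makes rollback and traceability straightforward.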

Who Is This For?

Why This Course?

Learn reliable productionization and scalability patterns for ML models

Increase efficiency via automation across training, testing, deployment, and monitoring

Standardize collaboration between data science, engineering, and operations

Build continuous monitoring and update mechanisms for production performance

Establish sustainable lifecycle management with CI/CD foundations

Learning Outcomes

Requirements

Course Curriculum

1.1 Scope and Objectives of MLOps

1.1.1 What MLOps is (and is not)

1.1.2 “Model development” vs “ML product development”

1.1.3 Success criteria for production ML: reliability, sustainability, measurable impact

1.1.4 Why ML projects require “operations”: data and behavior variability

1.2 Relationship with DevOps and Key Differences

1.2.1 Code-centric lifecycle vs data+model-centric lifecycle

1.2.2 Non-deterministic training/inference and operational implications

1.2.3 “Offline good → Online bad”: distribution shift, label delay, feedback loops

1.2.4 Operational risk classes in ML: performance, security, compliance, cost

1.3 End-to-End ML Lifecycle

1.3.1 Data collection → preparation → training → evaluation

1.3.2 Packaging → serving → monitoring → retraining

1.3.3 Lifecycle artifacts: dataset, feature set, model artifact, pipeline run, deployment

1.3.4 Lifecycle roles: development, release, operations, governance

1.4 MLOps Component Map (Concept Map)

1.4.1 Data layer: sources, storage, access, schemas

1.4.2 Training layer: pipelines, experiment management, resource management

1.4.3 Model management: registry, stages, approval workflow

1.4.4 Deployment layer: serving, scaling, version transitions

1.4.5 Observability: metrics, logs, traces, alerts, dashboards

1.4.6 Governance: security, ethics, compliance, auditability

1.5 Common Anti-Patterns and Root-Cause Thinking

1.5.1 Notebook-only development → fragile production systems

1.5.2 Data leakage → wrong model selection

1.5.3 Train/serve skew → live performance degradation

1.5.4 No versioning → irreversible failures

1.5.5 No monitoring → “dark model” problem
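
To make items such as distribution shift (1.2.3) and train/serve skew (1.5.3) more concrete, here is a minimal sketch of one common way to compare a feature's training distribution with its live distribution: the Population Stability Index (PSI). The course outline does not name this metric, so treat it as an illustrative choice; the function name, bin count, and the `eps` floor are assumptions.

```python
import math


def population_stability_index(expected, actual, n_bins=10, eps=1e-6):
    """Compute PSI between a reference sample and a live sample.

    Bins are equal-width over the range of `expected` (an assumption;
    quantile bins are another common choice). Live values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / n_bins

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)
            counts[idx] += 1
        total = len(values)
        # Floor each fraction at eps so the logarithm is always defined.
        return [max(c / total, eps) for c in counts]

    e = bin_fractions(expected)
    a = bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    train = [i / 100 for i in range(100)]          # reference feature values
    live = [0.5 + i / 200 for i in range(100)]     # shifted live values
    print("PSI, same data:   ", population_stability_index(train, train))
    print("PSI, shifted data:", population_stability_index(train, live))
```

A monitoring job could compute a score like this per feature on a schedule and raise an alert when it crosses a threshold the team has agreed on, which is one way to avoid the "dark model" problem listed above.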

Instructor

Şükrü Yusuf Kaya

AI Consultant & Instructor

Şükrü Yusuf KAYA is an AI Consultant and Technology Strategist with international experience in integrating artificial intelligence into business. With operations spanning six countries, he connects what the technology can do in theory with practical business needs, overseeing end-to-end AI projects in data-critical sectors such as banking, e-commerce, retail, and logistics.

His technical expertise centers on Generative AI and Large Language Models (LLMs), where he helps organizations build durable architectures rather than rely on short-term solutions. His focus on turning complex algorithms and advanced systems into tangible business value aligned with corporate growth targets has made him a sought-after solution partner in the industry.

Alongside his consulting and project management career, Şükrü Yusuf KAYA works as an instructor, guided by the motto "Making AI accessible and applicable for everyone." His training programs serve a wide range of professionals, from technical teams to C-level executives, and prioritize increasing organizational AI literacy and establishing a sustainable culture of technological transformation.

Frequently Asked Questions
